Introduction

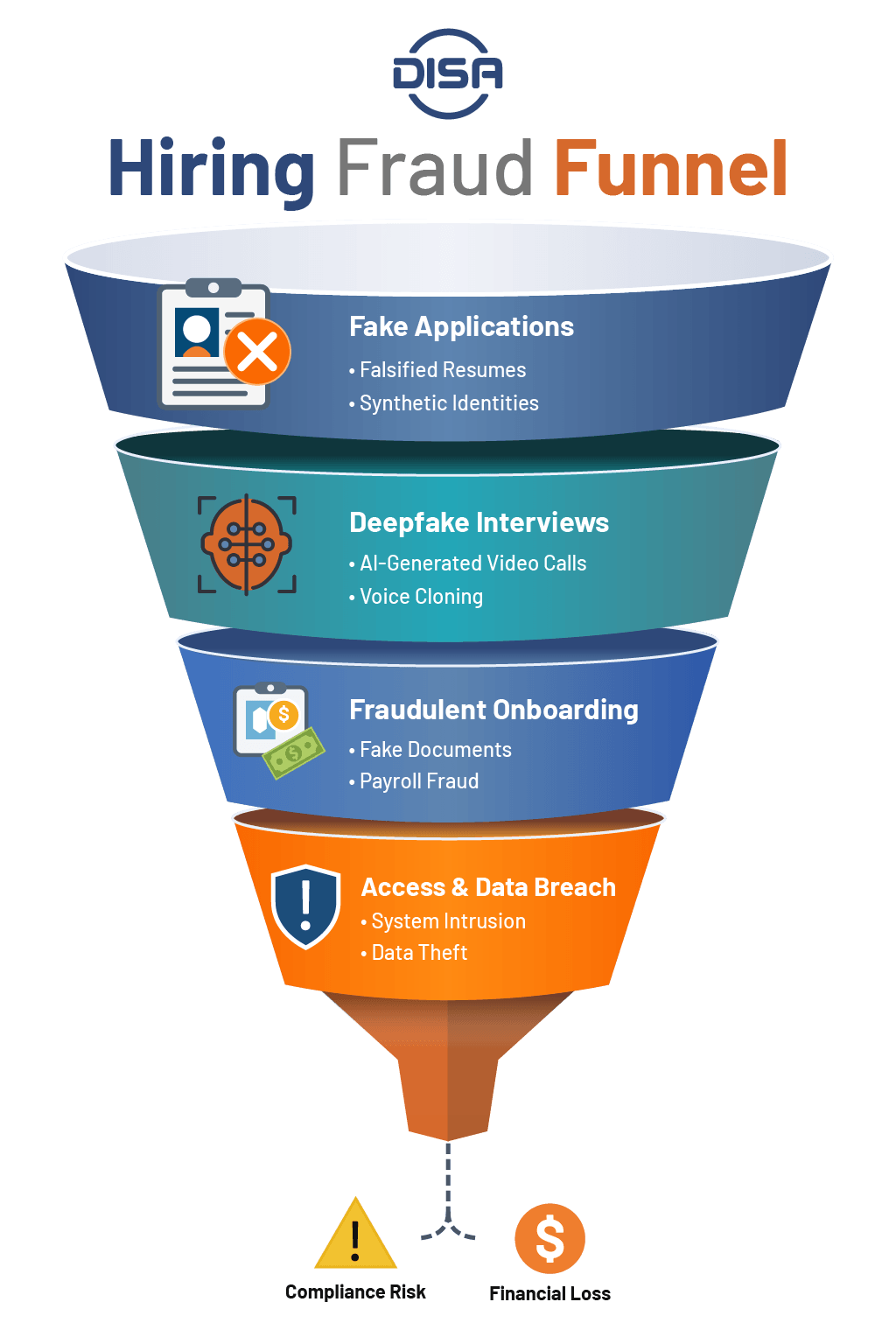

The recruitment landscape has entered uncharted territory: Generative AI tools have made it incredibly cheap and easy for bad actors to bypass traditional recruitment guardrails. And the combination of accessible technology, remote work models, and fragmented HR processes has created a vulnerable environment where hiring fraud can thrive. As organizations scale remote hiring, traditional pre-employment screening and background checks alone are no longer enough to prevent sophisticated fraud.

When a fraudulent hire successfully navigates the system, organizations can suddenly face access vulnerabilities, payroll diversion, and severe data breaches. Fake job candidates also expose the company to significant HR compliance risk: without stronger employment verification and identity controls, regulatory exposure grows alongside the risk of a breach.

What is AI-Powered Hiring Fraud?

AI-powered hiring fraud is the use of artificial intelligence to create fake candidate identities, manipulate interviews, or bypass hiring verification systems.

How it differs from “resume exaggeration”

Candidates in many industries have long stretched the truth on their resumes, perhaps by extending employment dates or inflating a job title. AI-powered hiring fraud goes far beyond those little white lies: it involves the wholesale fabrication of a candidate's persona. Malicious actors can use generative AI to draft polished cover letters, manufacture fake portfolios, and even alter live video feeds.

Why it’s an identity + trust problem now

Hiring fraud is now fundamentally an identity and trust problem: If human resources cannot definitively prove that the person on the screen is the person who submitted the application, the entire chain of trust breaks. Consequently, HR teams need to establish a verified identity at the very beginning of the employment lifecycle, because relying solely on a standard background check without robust identity verification leaves doors open to synthetic identities. Unlike traditional discrepancies caught during background checks, AI-driven fraud can bypass standard workforce screening processes entirely.

Why AI Is Targeting HR

Remote hiring + fragmented handoffs

The widespread adoption of remote work removes the physical safeguards of in-person interviews, which makes it harder to visually confirm a candidate's identity. Plus, the fragmented handoffs between talent acquisition, hiring managers, and IT departments create critical communication and visibility gaps that scammers can exploit.

When different teams handle different parts of the onboarding process without a unified identity thread, onboarding fraud can slip through the cracks.

More digital identity footprints to exploit

Individuals generate massive digital footprints, which threat actors can mine (via professional directories and public databases) to build convincing profiles for fake job candidates. By combining that harvested data with AI generation, they can create synthetic identities that look perfect on paper.

Because HR teams rely heavily on these same digital footprints to source talent, distinguishing between a legitimate professional and a carefully constructed fake has become exceedingly difficult.

Deepfake Interview Fraud: How It Works and How to Detect It

Common deepfake interview tactics (video, voice, proxies)

A deepfake interview involves manipulating audio and visual feeds in real-time to deceive recruiters.

Cybercriminals use sophisticated software to map a fake face over their own during video calls, along with voice cloning technology to sound like a native speaker or match a specific gender and age profile. In some cases, a highly skilled proxy sits off-camera and feeds answers to the visible applicant. These tactics help fake candidates pass technical screenings, because the visually fabricated "candidate" appears to provide perfect answers.

Recruiter red flags and “tell” patterns

Despite technological advancements, deepfake interviews often exhibit certain detectable (if subtle) flaws:

- Visual artifacts, such as blurring around the edges of the face or unnatural eye movements

- Audio delays, where the lip movements do not perfectly match the spoken words

- Candidates who refuse to turn on their camera, citing persistent "bandwidth issues"

Talent acquisition teams should receive training to recognize these "tell" patterns as a crucial first line of defense against hiring fraud.

What to do if you suspect impersonation (in-the-moment steps)

If a recruiter suspects they are speaking with a fake candidate during a live session, they should take the following steps:

- Maintain a neutral demeanor to avoid alerting the bad actor.

- Ask the candidate to perform a physical action, such as turning their head completely to the side or passing a hand in front of their face (these movements often break the deepfake tracking software).

- Prompt the candidate with specific, highly contextual questions about their past experience that a proxy would not quickly know.

If the suspicion persists, the recruiter should politely conclude the interview and immediately escalate the issue to the IT security team.

What You Should Do If You Find a Fake Candidate

Preserve evidence and pause the process safely

From an HR compliance risk perspective, if you suspect hiring fraud, it’s critical to contain the situation quickly and follow documented response protocols. Immediately pause the candidate's progression through the pipeline. Do not alert the applicant that they are under investigation, as this may cause them to destroy evidence or launch retaliatory cyberattacks.

Preserve all communication logs, resume files, and interview recordings. Document the specific anomalies or red flags that led to the suspicion.

Notify HR/Legal/IT-Security and review exposure

Recruitment fraud is an organizational threat that requires immediate cross-functional collaboration:

- Ensure that your IT team reviews the files, links, and messages the applicant submitted for malware or phishing attempts.

- Consult with your legal department to understand any regulatory obligations, especially if the fake candidate had access to sensitive data.

- Then, conduct a thorough exposure review to determine if the actor compromised any internal systems.

Prevent repeat attacks (controls + training updates)

After neutralizing the immediate threat, focus on systemic improvements to prevent future onboarding fraud.

- Update your identity verification protocols based on the specific tactics used by the bad actor.

- Conduct a debriefing session with the talent acquisition team to review the incident and reinforce red flag training.

Continuous improvement of your hiring controls is the only way to stay ahead of evolving AI threats.

The Top AI-Enabled Hiring Fraud Schemes

AI-generated resumes, portfolios, and credentials

Many of these schemes are specifically designed to exploit gaps in background checks and workforce screening processes. Generative AI allows applicants to produce pristine, highly optimized resumes in seconds. Malicious actors can use these tools to completely fabricate work histories, educational backgrounds, and technical portfolios, as well as generate code snippets, design mockups, and writing samples that look completely authentic.

This type of recruitment fraud can overwhelm Applicant Tracking Systems (ATS) with seemingly perfect candidates, and when AI-generated credentials pass initial screens, companies can waste valuable time interviewing fraudulent individuals.

Fake job candidates and proxy interviewing

Proxy interviewing involves one person securing the job on behalf of another; usually, this looks like a highly skilled individual conducting the technical interview, while a less qualified person actually shows up for work.

This scheme relies heavily on remote environments where physical identity verification is lax. These fake candidates secure lucrative roles, only to underperform drastically or, worse, act as insider threats.

Onboarding fraud (payroll setup, access provisioning, account takeover)

Once an offer is accepted, the threat actor pivots to onboarding fraud, where the primary goal is often to route a sign-on bonus or initial paychecks to untraceable accounts. They also target the access provisioning process, with the aim of acquiring corporate email addresses and VPN access.

With legitimate credentials, they can execute account takeovers and navigate the corporate network undetected.

Identity theft and synthetic identity tactics

Scammers frequently leverage stolen Personally Identifiable Information (PII) to commit identity theft during the application process, or use synthetic identity tactics (i.e., combining a real Social Security Number with a fake name and address). This way, scammers can create a completely new, untraceable persona. Traditional screening methods often struggle to detect these synthetic identities because the data fragments appear legitimate in isolation.

When to Add Verification Steps

High-risk roles and privileged access

Not all roles carry the same level of risk. Positions that require privileged access to financial systems, customer databases, or proprietary source code demand more stringent identity verification than lower-level positions.

For higher-risk roles, HR teams should consider implementing advanced biometric checks and continuous monitoring. A single compromised account with administrative privileges can cause catastrophic damage to an organization.
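
The tiering described above can be sketched as a simple policy lookup. This is a minimal illustration, not a prescribed policy: the access categories, check names, and the `Role` type are hypothetical placeholders drawn from the examples in the text, and a real program would map them to its own systems and vendors.

```python
from dataclasses import dataclass

# Hypothetical access categories, taken from the examples in the text.
PRIVILEGED_ACCESS = {"financial_systems", "customer_databases", "source_code"}

# Hypothetical check names; substitute the steps your organization actually uses.
BASELINE_CHECKS = ["government_id_match", "background_check"]
ELEVATED_CHECKS = BASELINE_CHECKS + ["biometric_check", "continuous_monitoring"]


@dataclass(frozen=True)
class Role:
    title: str
    access: frozenset      # systems the role can reach
    admin: bool = False    # True if the role holds administrative privileges


def required_checks(role):
    """Return the verification steps for a role under this illustrative policy."""
    # Any overlap with privileged systems, or admin rights, triggers the elevated tier.
    if role.admin or role.access & PRIVILEGED_ACCESS:
        return list(ELEVATED_CHECKS)
    return list(BASELINE_CHECKS)
```

For example, `required_checks(Role("Backend Engineer", frozenset({"source_code"})))` would return the elevated list, while a role with no privileged access would get only the baseline checks.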

Remote hiring and distributed teams

When you never meet an employee in a physical office, your digital verification processes must be as rigorous as possible. Organizations with heavy remote footprints should consider mandatory online identity verification at multiple stages of the candidate journey.

Third-party recruiters and staffing partners

Relying on third-party recruiters and staffing agencies introduces a layer of abstraction into the hiring process, and while these partners provide valuable talent, companies cannot blindly trust their vetting procedures. Employers should consider establishing clear service-level agreements that detail the exact identity verification solutions required by the agency, and audit their staffing partners regularly.

Hiring Fraud Prevention Controls by Stage (a Practical Checklist)

Layered defenses are critical to stopping recruitment fraud and closing gaps in workforce screening. The following checklist outlines controls for each hiring stage.

Application stage controls

- Deploy device fingerprinting to flag multiple applications from the same device or IP address.

- Monitor for rapid, automated form submissions indicative of bot activity.

- Utilize preliminary data enrichment to cross-reference basic contact information.
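
The first two application-stage controls can be sketched roughly as follows. This is an illustration under stated assumptions, not a production detector: the `Application` record, its `device_id` fingerprint field, and the thresholds are all hypothetical, and real fingerprinting would come from a dedicated tool or from your ATS.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Application:
    applicant_email: str
    device_id: str          # hypothetical fingerprint captured by the application form
    ip_address: str
    submitted_at: datetime


def flag_shared_origin(apps, max_per_origin=2):
    """Return origins (device or IP) used by more distinct applicants than allowed."""
    applicants_by_origin = defaultdict(set)
    for app in apps:
        applicants_by_origin[("device", app.device_id)].add(app.applicant_email)
        applicants_by_origin[("ip", app.ip_address)].add(app.applicant_email)
    return {
        origin: sorted(emails)
        for origin, emails in applicants_by_origin.items()
        if len(emails) > max_per_origin
    }


def flag_rapid_submissions(apps, window=timedelta(minutes=1), max_in_window=3):
    """Return IPs that submitted more than max_in_window applications within window."""
    times_by_ip = defaultdict(list)
    for app in apps:
        times_by_ip[app.ip_address].append(app.submitted_at)

    flagged = set()
    for ip, times in times_by_ip.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Advance the left edge until the span fits inside `window`.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 > max_in_window:
                flagged.add(ip)
                break
    return flagged
```

Both functions only surface candidates for human review. Shared offices, universities, and VPNs can legitimately produce many applications from one IP address, so a flag should prompt a closer look rather than an automatic rejection.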

Interview stage controls

- Require candidates to use high-quality video during all remote interviews.

- Implement live, interactive technical assessments rather than take-home tests.

- Train interviewers to look for deepfake artifacts and audio desyncs.

Offer stage controls

- Initiate comprehensive pre-employment background screening, including background checks and identity verification, before finalizing any offer.

- Mandate strict identity verification services to match the applicant to their provided ID.

- Confirm past employment through authoritative sources, not just provided references.

Onboarding stage controls

- Enforce multi-factor authentication (MFA) immediately upon issuing corporate credentials.

- Verify bank account ownership details before initiating any payroll setup.

- Conduct a live video welcome call on day one to re-verify the employee's face.

First 30 days controls

- Audit system access logs for unusual download patterns or off-hours activity.

- Monitor employee productivity and engagement metrics against expected baselines.

- Restrict access to highly sensitive data repositories until the probationary period ends.
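
As a rough sketch of the access-log audit in the first bullet, the snippet below flags off-hours events and unusually heavy download days. The `AccessEvent` shape, the 07:00 to 19:00 working window, and the 50-downloads-per-day limit are assumptions chosen for illustration; real logs and sensible baselines will differ by organization.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class AccessEvent:
    user: str
    action: str            # e.g. "download" or "login" (hypothetical labels)
    timestamp: datetime    # assumed to be in the employee's local time


def off_hours_events(events, start=time(7, 0), end=time(19, 0)):
    """Return events that fall outside the assumed working window."""
    return [e for e in events if not (start <= e.timestamp.time() < end)]


def heavy_downloaders(events, daily_limit=50):
    """Return {(user, date): count} for days where downloads exceed daily_limit."""
    counts = Counter(
        (e.user, e.timestamp.date()) for e in events if e.action == "download"
    )
    return {key: n for key, n in counts.items() if n > daily_limit}
```

Fixed thresholds like these are a starting point. Comparing each new hire against peers in the same role is likely to produce fewer false alarms than a single company-wide limit.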

Frequently Asked Questions about AI Hiring Fraud

What is hiring fraud?

Hiring fraud encompasses any deceptive practice used to gain employment unlawfully. This includes fabricating credentials, using stolen identities, or employing proxies to complete technical interviews.

What is a deepfake interview, and how can HR detect it?

A deepfake interview uses AI to alter a candidate's appearance or voice in real time. HR can detect it by looking for visual blurring around the face, watching for audio-video synchronization issues, and asking the candidate to perform unexpected physical movements during the call.

What should HR verify before onboarding a new hire?

Before onboarding, HR should verify the candidate's government-issued identity, right to work, educational credentials, and past employment. Verifying bank account ownership is also critical to prevent onboarding fraud and payroll diversion.

What is the difference between identity verification and a background check?

Identity verification confirms the applicant is who they say they are, often using biometrics and document scanning. Pre-employment background checks review the verified individual's history, including criminal records and past employment.

How do pre-employment background checks reduce hiring risk?

Pre-employment background checks reduce hiring risk by validating candidate claims and uncovering potential red flags, such as criminal histories relevant to the role. They act as a critical layer of defense against negligent hiring.

What should you do if you suspect a fake candidate?

If you suspect a fake candidate, immediately pause their application process without notifying them. Preserve all digital evidence and escalate the issue to your IT security and legal teams for a thorough exposure review.

How common is AI hiring fraud?

Hiring fraud is a rapidly growing threat, largely accelerated by remote work models and accessible generative AI tools. In particular, there has been a significant increase in suspicious activity involving deepfake media used to circumvent identity verification methods.

Because malicious actors can easily use synthetic identities and AI to fabricate credentials at scale, organizations across all industries should consider implementing stronger defenses to combat the rising volume of fraudulent applicants attempting to infiltrate their systems.

Combating AI Hiring Fraud With DISA Global Solutions

In the era of generative AI, the traditional methods of vetting applicants are no longer sufficient, as deepfake interviews and synthetic profiles have fundamentally changed the risk landscape. By implementing identity verification solutions and comprehensive employment screening, your HR teams can protect their organizations from the severe financial and security impacts of hiring fraud.

At DISA Global Solutions, we understand the complexities of managing compliance and safety across diverse industries. Our comprehensive screening services are designed to support your HR teams in their journey toward a secure, fraud-free hiring process. We provide the resources you need to optimize your onboarding operations, reduce hiring risks, and improve organizational safety.

With DISA's comprehensive support, you can confidently navigate the complexities of AI-driven recruitment fraud and protect your business from liability.

DISA Global Solutions aims to provide accurate and informative content. This content is for educational purposes only and does not constitute legal advice. The reader retains full responsibility for the use of the information contained herein. Always consult with a professional or legal expert.